CrawlTrack, webmaster dashboard. Web analytics and SEO.

CrawlProtect, your website safety. Protection against hacking, spam and content theft.

Two PHP/MySQL scripts, free and easy to install.

The tools you need to manage and keep control of your site.


CrawlProtect documentation

The documentation is still under construction and new information is continuously added. If you cannot find the answer to your question, don't hesitate to contact the support team; the best way to do so is through the forum.

Install CrawlProtect

1) Unzip the downloaded file.

2) Upload the crawlprotect folder to your website (you can rename it, but you should not do so once the htaccess file has been created). Your CrawlProtect URL will be: www.yoursite.com/crawlprotect/.

3) Go to your CrawlProtect URL, choose your language, and follow the instructions to complete the installation.

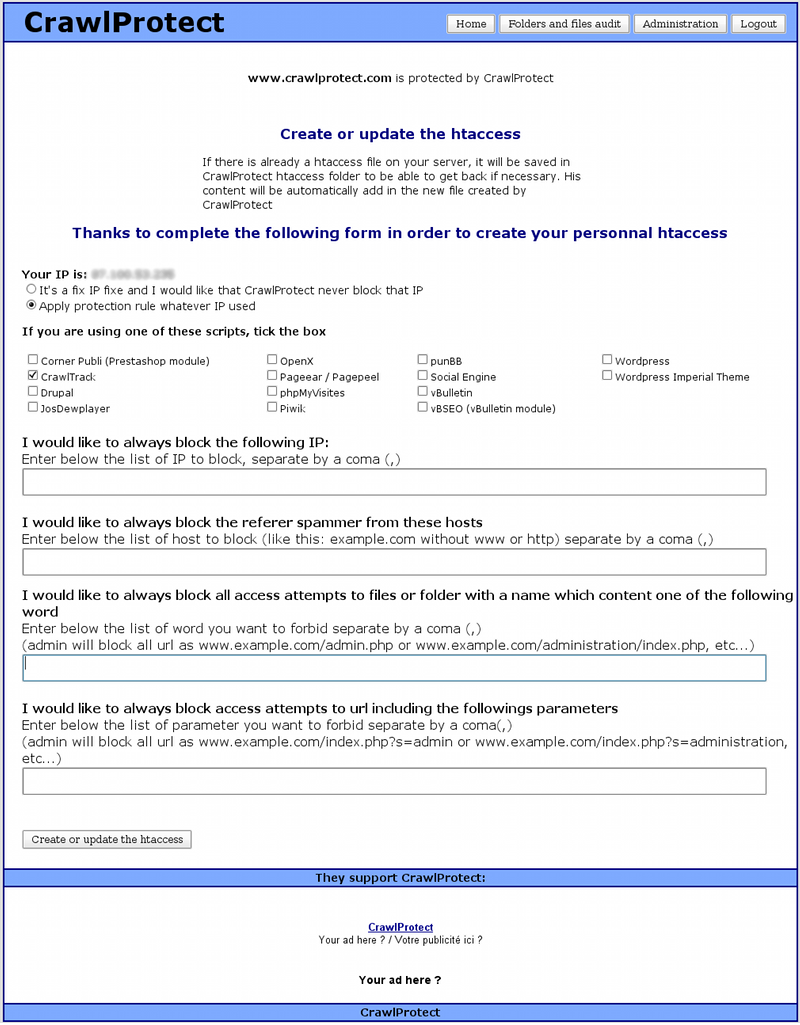

4) Once logged in, the first thing to do is to go to the administration page to create your htaccess file. Choose the parameters that match your site and create your own htaccess file. Don't worry: if you already had an htaccess file in place, CrawlProtect will grab its content and include it in the new file.

5) Then add the proposed PHP tag to your site pages. You will find here detailed instructions on how to place it (it works like the CrawlTrack tag).

6) Then you can change the chmod level of your folders and files directly from the CrawlProtect interface. Just check that your site still works correctly with the new values.

7) That's all, CrawlProtect is protecting your website. To test that everything is OK, try to visit a URL like this: http://www.yoursite.com/index.php?site=http://www.test.com (replace yoursite.com with your website name). You should be blocked.
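The check in step 7 can also be scripted. The following is only a minimal Python sketch, not part of CrawlProtect: the function names are hypothetical, and it assumes that a blocked request is answered with a 4xx or 5xx HTTP status. If your htaccess blocks in another way (for example with a redirection), adapt `looks_blocked` accordingly.

```python
import urllib.error
import urllib.request
from urllib.parse import urlsplit


def build_test_url(site: str) -> str:
    """Return the test URL from step 7 for the given site name."""
    # Accept "www.yoursite.com" as well as "http://www.yoursite.com/".
    host = urlsplit(site if "//" in site else "//" + site).netloc
    return f"http://{host}/index.php?site=http://www.test.com"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the HTTP status code the server answers with."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises on 4xx/5xx answers; the code is what we are after.
        return error.code


def looks_blocked(status: int) -> bool:
    """Assumption: a blocked request gets a 4xx or 5xx status."""
    return status >= 400
```

To use it, call `looks_blocked(fetch_status(build_test_url("www.yoursite.com")))` with your own site name in place of www.yoursite.com.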

Update of a previous release

1) Unzip the downloaded file.

2) Upload the new files to your CrawlProtect folder on your website, making sure that your FTP software is set up to overwrite the existing files (also pay attention to the chmod level of the files, and check that the transfer was successful).

3) Go to your CrawlProtect URL; the upgrade process will start automatically.

4) Once logged in, the first thing to do is to go to the administration page to recreate your htaccess file in order to take advantage of the new features.

5) That's all, CrawlProtect is updated and is protecting your website.

Using CrawlProtect

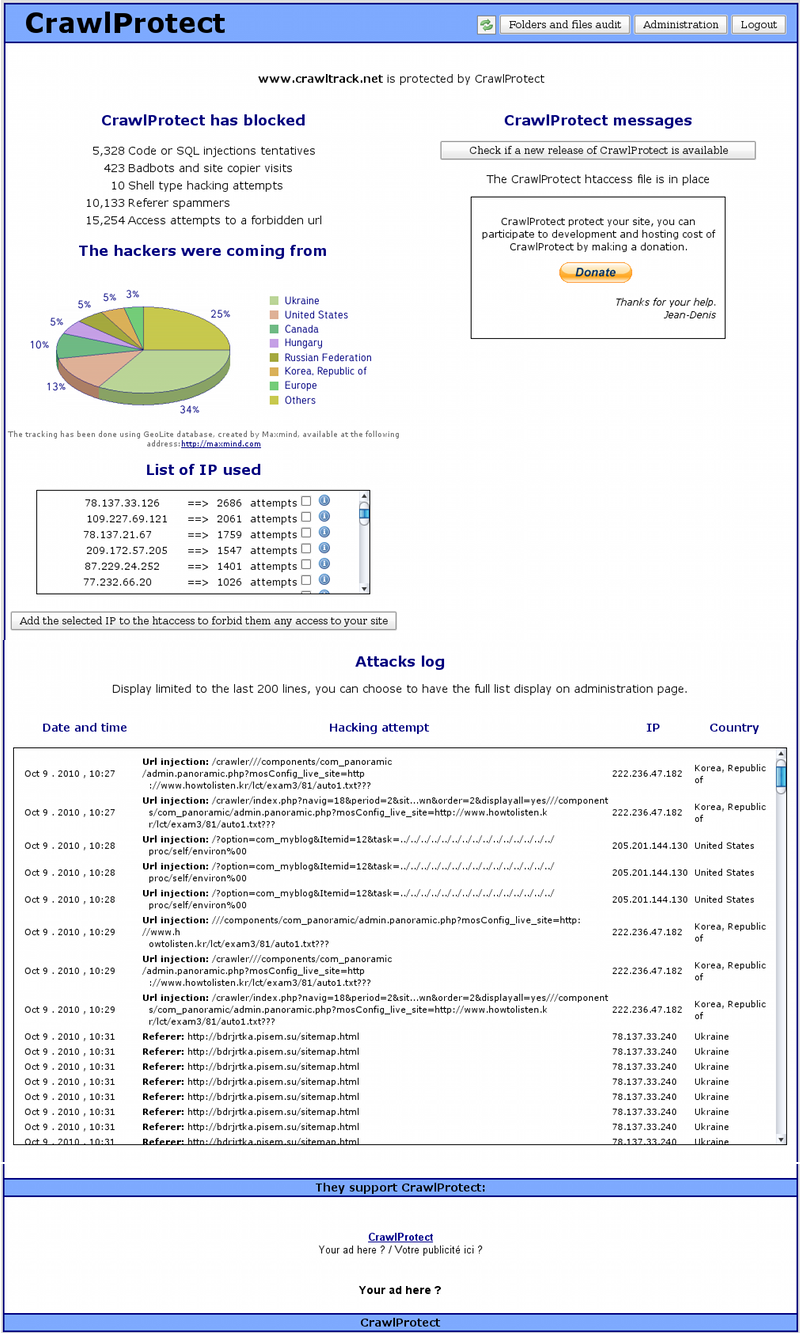

CrawlProtect runs on its own; you don't need to do anything after installation. If you want to know how many hacking attempts have been blocked by your CrawlProtect, visit your password-protected CrawlProtect URL: http://www.votresite.com/crawlprotect/ (replace votresite.com with your website name).

You will see how many hacking attempts have been blocked by CrawlProtect.

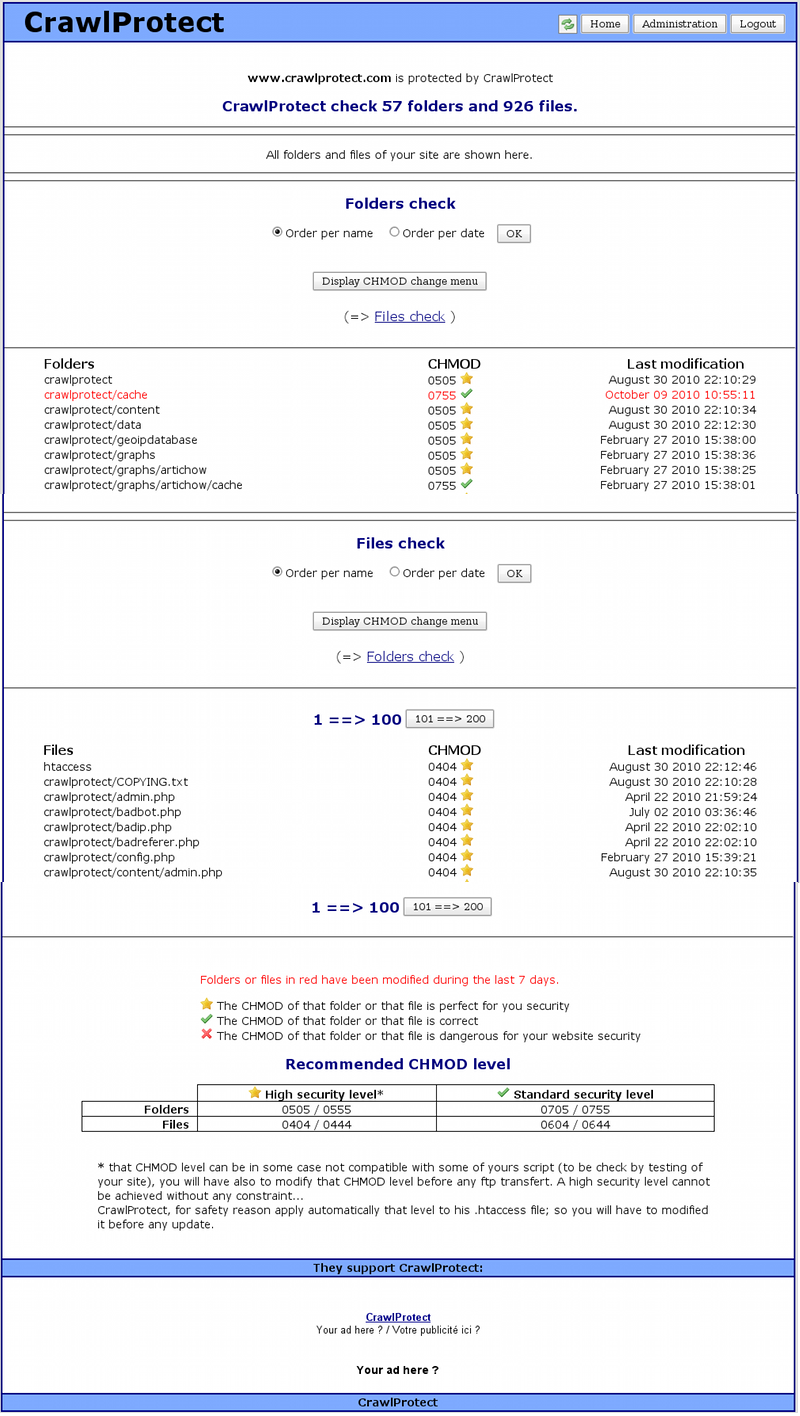

You will be able to check any modification of your files or folders, and you will also be able to choose, in a few clicks, the best chmod for your folders and files.

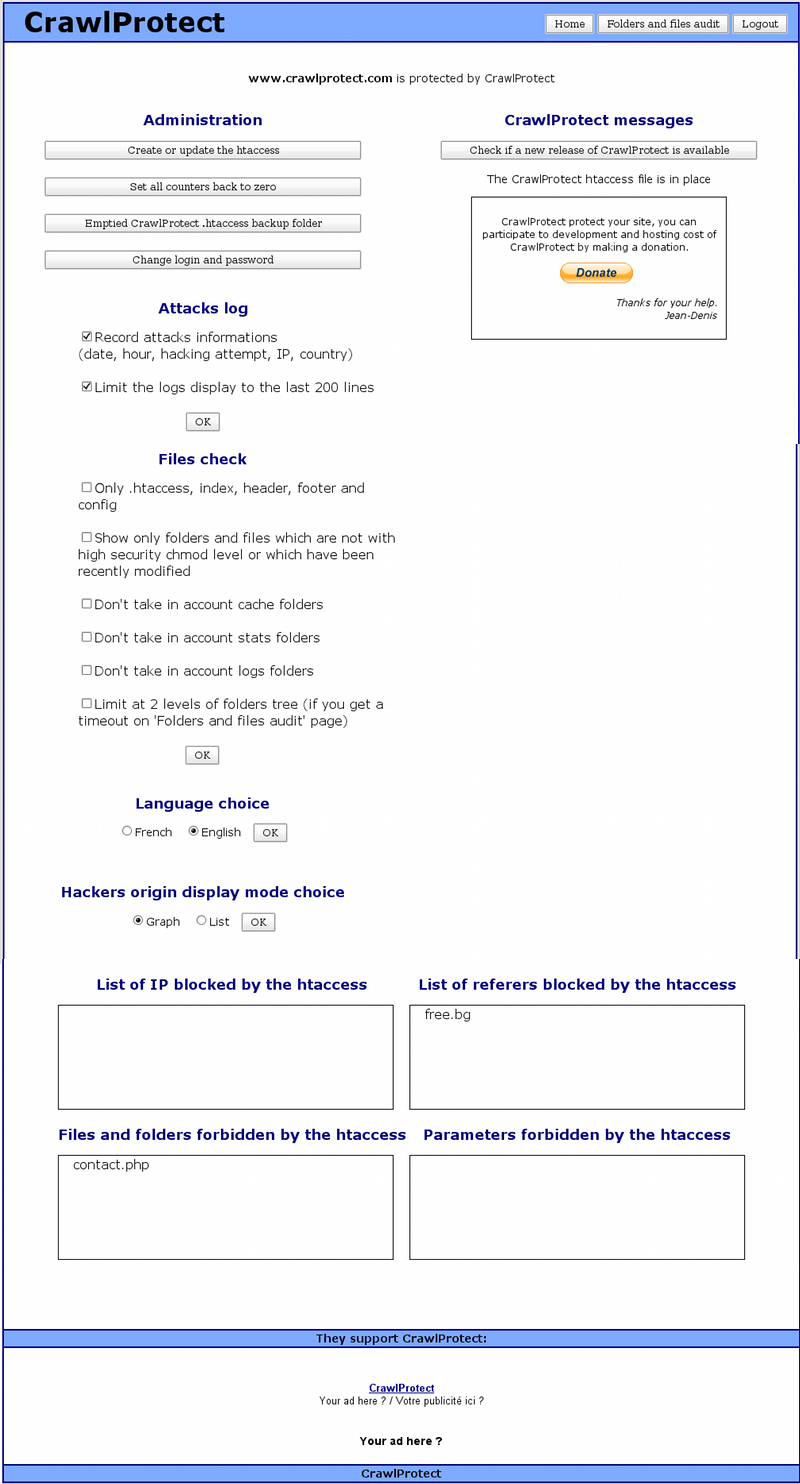
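If you are not used to reading chmod values, the short Python sketch below may help. It is an illustration only (the helper names are hypothetical and it does not use CrawlProtect): it converts an octal value into the rwx notation shown by most FTP software and tells you whether everybody is allowed to write to the file.

```python
import stat


def describe_mode(octal_text: str, is_dir: bool = False) -> str:
    """Turn an octal chmod value such as "604" into ls-style notation."""
    permissions = int(octal_text, 8)
    file_type = stat.S_IFDIR if is_dir else stat.S_IFREG
    return stat.filemode(file_type | permissions)


def is_world_writable(octal_text: str) -> bool:
    """True when users other than the owner and the group may write."""
    return bool(int(octal_text, 8) & stat.S_IWOTH)


for mode in ("604", "644", "777"):
    print(mode, describe_mode(mode), is_world_writable(mode))
```

For example, 604 reads as `-rw----r--`: the owner can read and write, the group has no access, and everybody else can only read.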

On the administration page you can change your CrawlProtect parameters.

In a few clicks you can build your own htaccess file.

After creating the CrawlProtect htaccess, I get an error 500 on my site. What should I do?

1) With your FTP software, make a copy of the htaccess created by CrawlProtect.

2) Change the CHMOD of the htaccess created by CrawlProtect to 604.

3) Delete the htaccess created by CrawlProtect.

4) Go to the CrawlProtect admin page, click on "Suppress the CrawlProtect htaccess" and confirm the deletion. Your site is now back.

5) Contact CrawlProtect support, attaching a copy of the CrawlProtect htaccess that caused the issue.
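If you prefer a script to an FTP client, steps 1 to 3 above can be sketched with Python's standard ftplib module. This is only a sketch under assumptions: the function name, the backup file name, and the host and folder in the usage note are hypothetical, the htaccess is assumed to sit in the current folder of the FTP session, and `SITE CHMOD` is a common server extension that some hosts do not accept.

```python
import ftplib


def rescue_htaccess(ftp: ftplib.FTP,
                    remote_name: str = ".htaccess",
                    local_copy: str = "htaccess.crawlprotect.bak") -> str:
    """Run steps 1 to 3 over an open FTP session; return the backup path."""
    # Step 1: keep a local copy, to be sent to support later on.
    with open(local_copy, "wb") as backup:
        ftp.retrbinary(f"RETR {remote_name}", backup.write)
    # Step 2: set the file to 604 (SITE CHMOD is not standard FTP,
    # so this call may fail on some servers).
    ftp.sendcmd(f"SITE CHMOD 604 {remote_name}")
    # Step 3: delete the file from the server.
    ftp.delete(remote_name)
    return local_copy
```

A typical call would open the session with `ftplib.FTP("ftp.yoursite.com")`, log in, move to the folder holding the htaccess with `cwd`, and then call `rescue_htaccess(ftp)`. Step 4 still has to be done from the CrawlProtect admin page.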

After creating the CrawlProtect htaccess, some functions of my site are not working as usual. What should I do?

1) Have a look at the CrawlProtect logs (on the script homepage).

2) Take note of the blocking causes listed.

3) Until the documentation is completed, contact CrawlProtect support, giving the URL of your site and the detailed list of the blocking causes.

When I click on "Folders and files audit" I get a blank page. What should I do?

The process that lists your folders and files uses a lot of resources, even more so if your site contains a lot of different folders and files.

If you get a blank page, it is usually because the script has run longer than the maximum authorized time and has been killed by the server. There are different ways to solve that issue.

1) If you have access to your PHP parameters (which is the case on a dedicated server but not on shared hosting), you can increase the value of max_execution_time in your php.ini until you find the right value.

2) If you cannot modify your PHP parameters, you can limit the number of folders and files to audit on the CrawlProtect admin page. You can audit only .htaccess, index, header, footer and config files; or only folders and files which do not have a high-security chmod level or which have not been recently modified; or limit the audit to 2 levels of the folder tree. Testing the different options is how you will find the best setting for your site.
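To see why these limits reduce the work so much, here is a small Python sketch of a limited file listing. It is not CrawlProtect's own code: the names are hypothetical, and it assumes that "2 levels" means the root folder plus its direct subfolders and that the key files are recognized by the part of the name before the first dot.

```python
import os

KEY_NAMES = ("index", "header", "footer", "config")


def is_key_file(name: str) -> bool:
    """True for .htaccess and for index, header, footer and config files."""
    if name == ".htaccess":
        return True
    return name.split(".", 1)[0].lower() in KEY_NAMES


def limited_audit(root: str, max_depth: int = 2, key_files_only: bool = True):
    """Yield file paths under root, going at most max_depth levels deep."""
    root = os.path.abspath(root)
    for folder, subfolders, files in os.walk(root):
        relative = os.path.relpath(folder, root)
        # The root folder is level 1, its direct subfolders are level 2.
        depth = 1 if relative == "." else relative.count(os.sep) + 2
        if depth >= max_depth:
            subfolders[:] = []  # prune: os.walk will not go any deeper
        for name in sorted(files):
            if not key_files_only or is_key_file(name):
                yield os.path.join(folder, name)
```

Pruning the folder list stops the walk early instead of filtering afterwards, which is what saves time on a site with many nested folders.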

When I want to access my CrawlProtect page, I'm redirected to my site homepage. What should I do?

To check that its tag is present, CrawlProtect displays your site homepage in an invisible iframe, and it looks like you have a script that prevents your site from being displayed inside an iframe.

You have two ways to solve that issue:

1) Remove that script (just for the time of the CrawlProtect installation and tag presence check).

2) Modify CrawlProtect by replacing the header.php file (in the include folder) with that one. The tag presence check will then require you to visit your site once that file is in place (to create the validation cookie).
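If you are not sure which script causes the redirection, a quick search of your homepage source can help. The Python sketch below is only a heuristic and is not part of CrawlProtect: the patterns are assumptions covering a few common ways of writing such a script, so an empty result does not prove that your site has none.

```python
import re

# Heuristic patterns only; a real anti-iframe script may look different.
FRAME_BUSTER_PATTERNS = [
    re.compile(r"\btop\.location\b"),
    re.compile(r"\b(?:self|window)\s*!==?\s*(?:top|parent)\b"),
    re.compile(r"\b(?:top|parent)\s*!==?\s*(?:self|window)\b"),
]


def find_frame_busters(html: str) -> list:
    """Return the snippets of html that match one of the patterns."""
    hits = []
    for pattern in FRAME_BUSTER_PATTERNS:
        hits.extend(match.group(0) for match in pattern.finditer(html))
    return hits
```

Pass it the HTML source of your homepage (and of the JavaScript files it loads); each returned snippet points to a place worth checking.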